We’re in the era of vibe coding, in which artificial intelligence models generate code based on a developer’s prompt. Unfortunately, under the hood, the vibes are bad. According to a recent report published by data security firm Veracode, about half of all AI-generated code contains security flaws.

Veracode tasked more than 100 different large language models with completing 80 separate coding tasks, ranging from work in different programming languages to building different types of applications. Per the report, each task had known potential vulnerabilities, meaning the models could complete each challenge in either a secure or an insecure way. The results weren’t exactly inspiring if security is your top priority: only 55% of completed tasks ultimately produced “secure” code.

Now, it’d be one thing if these vulnerabilities were minor flaws that could easily be patched or mitigated. But they’re often pretty major holes. The 45% of code that failed the security check produced a vulnerability from the Open Worldwide Application Security Project’s (OWASP) top 10 security vulnerabilities: issues like broken access control, cryptographic failures, and data integrity failures. Basically, the output has problems big enough that you wouldn’t want to just spin it up and push it live, unless you’re looking to get hacked.

Perhaps the most interesting finding of the study, though, is not simply that AI models regularly produce insecure code. It’s that the models don’t seem to be getting any better. While syntax has improved significantly over the past two years, with LLMs now producing compilable code nearly all the time, the security of that code has remained essentially flat the entire time. Even newer and larger models fail to generate meaningfully more secure code.

The fact that the baseline of secure output for AI-generated code isn’t improving is a problem, because the use of AI in programming is growing more popular, and the attack surface is growing with it. Earlier this month, 404 Media reported on how a hacker managed to get Amazon’s AI coding agent to delete files on the computers it was used on by injecting malicious code with hidden instructions into the tool’s GitHub repository.

Meanwhile, as AI agents become more common, so do agents capable of cracking that very same code. Recent research out of the University of California, Berkeley, found that AI models are getting very good at identifying exploitable bugs in code. So AI models are consistently generating insecure code, and other AI models are getting really good at spotting those vulnerabilities and exploiting them. That’s all probably fine.

Trending Merchandise

SAMSUNG 27″ CF39 Series FHD 1...

TP-Link AXE5400 Tri-Band WiFi 6E Ro...

ASUS 31.5â 4K HDR Eye Care Mon...

Wireless Keyboard and Mouse Combo, ...

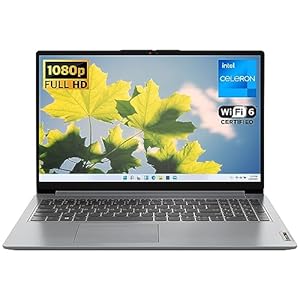

Lenovo IdeaPad 1 Student Laptop, In...